HOW YOUR OWN DNA COULD SOMEDAY SAVE YOUR VISION

Imagine living with a genetic disease that could cause blindness in your 40s, and your doctor tells you there are no treatment options. It's for patients like these that Johnson & Johnson is harnessing cutting-edge technology in the hope of finding real solutions.

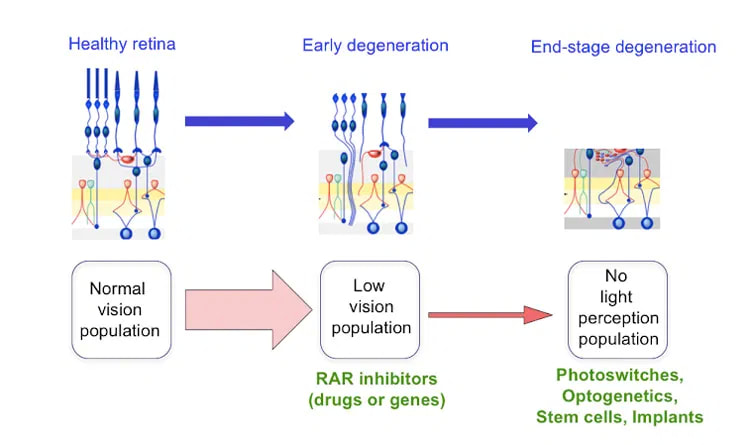

By Hallie Levine, October 11, 2021

It can be devastating to watch someone slowly lose their vision. Just ask James List, M.D., Ph.D., Global Therapeutic Area Head of Cardiovascular, Metabolism and Retina at Janssen Research & Development, part of the Janssen Pharmaceutical Companies of Johnson & Johnson. Before he joined the company in 2014, the Harvard endocrinologist spent his days counseling patients with diabetes. “Many people don’t realize that diabetes can cause irreversible damage to multiple organs, including the eyes,” he says.

Aimee Volk, MS, OTR/L, Vision and Independent Living Services Administrator for North Dakota Vocational Rehabilitation Human Services, has been elected as a Director for Eye-Link North Dakota. Aimee has a true desire to help individuals coping with substantial sight loss regain and maintain their independence. She began her career in Occupational Therapy in 2010, working in various hospital settings including ICU, rehabilitation, and acute care, and soon realized her passion was with vision. In 2017, Aimee began a new journey as the Vision and Independent Living Services Administrator for the North Dakota Division of Vocational Rehabilitation. Her educational background includes a Bachelor’s of University Studies, a Master’s degree in Occupational Therapy (2009) from the University of Mary in Bismarck, ND, and a Graduate Certificate in Low Vision (2020) from the University of Alabama at Birmingham. Aimee currently resides in Bismarck, ND with her husband Troy, daughters Paighton and Mallana, and three dogs. Eye-Link welcomes the leadership and experience Aimee brings to the North Dakota Board.

People with deteriorating vision could see better and retain useful vision longer if new therapies developed at UC Berkeley work as well in humans as they do in mice.
Millions of Americans are progressively losing their sight as cells in their eyes deteriorate, but a new therapy developed by researchers at the University of California, Berkeley, could help prolong useful vision and delay total blindness. The treatment, involving either a drug or gene therapy, works by reducing the noise generated by nerve cells in the eye, which can interfere with vision much the way tinnitus interferes with hearing.

UC Berkeley neurobiologists have already shown that this approach improves vision in mice with a genetic condition, retinitis pigmentosa, that slowly leaves them blind. Reducing this noise should bring images more sharply into view for people with retinitis pigmentosa and other types of retinal degeneration, including the most common form, age-related macular degeneration.

“This isn’t a cure for these diseases, but a treatment that may help people see better. This won’t put back the photoreceptors that have died, but maybe give people an extra few years of useful vision with the ones that are left,” said neuroscientist Richard Kramer, a professor of molecular and cell biology at UC Berkeley. “It makes the retina work as well as it possibly can, given what it has to work with. You would maybe make low vision not quite so low.”

Kramer’s lab is testing drug candidates that already exist, he said, though no one suspected that these drugs might improve low vision. He anticipates that the new discovery will send drug developers back to the shelf to retest these drugs, which interfere with cell receptors for retinoic acid. Many such drug candidates were created by pharmaceutical companies in the failed hope that they would slow the development of cancer.

“There has been a lot of excitement about emerging technologies that address blinding diseases at the end stage, after all of the photoreceptors are lost, but the number of people who are candidates for such heroic measures is relatively small,” Kramer said.
“There are many more people with impaired vision: people who have lost most, but not all, of their photoreceptors. They can’t drive anymore, perhaps they can’t read or recognize faces, all they have left is a blurry perception of the world. Our experiments introduce a new strategy for improving vision in these people.”

Kramer and his UC Berkeley colleagues reported their results this week in the journal Neuron.

‘Ringing in the eyes’

Researchers have known for years that the retinal ganglion cells, the cells that connect directly with the vision center in the brain, generate lots of static as the light-sensitive cells, the photoreceptors, begin to die. This happens in inherited diseases such as retinitis pigmentosa, which afflicts about one in 4,000 people worldwide, but it may also occur in the much larger group of older people with age-related macular degeneration, a disease that affects the crucial part of the retina needed for precise vision. The sharp edges of an image are drowned in such static, and the brain is unable to interpret what’s seen.

As the cells in a healthy retina (left) slowly die in degenerative diseases such as retinitis pigmentosa, different types of therapies may be needed. Richard Kramer is working on ways to improve low vision (middle) by blocking retinoic acid receptors (RAR), but is also exploring photoswitch and optogenetic therapies for those who are totally blind (right). (Richard Kramer image)

Kramer focused on the role of retinoic acid after he heard that it was linked to other eye changes resulting from retinal degeneration. The dying photoreceptors (the rods, sensitive to dim light, and the cones, needed for color vision) are packed with proteins called opsins. Each opsin combines with a molecule of retinaldehyde to form a light-sensitive protein called rhodopsin.

“There are 100 million rods in the human retina, and each rod has 100 million of these sensors, each one sequestering retinaldehyde,” he said. “When you start losing all those rods, all that retinaldehyde is now freely available to get turned into other things, including retinoic acid.”

Kramer and his team found that retinoic acid, well known as a signal for growth and development of embryos, floods the retina, stimulating the retinal ganglion cells to make more retinoic acid receptors. It’s these receptors that make ganglion cells hyperactive, creating a constant buzz of activity that submerges the visual scene and prevents the brain from picking out the signal from noise.

“When we inhibit the receptor for retinoic acid, we reverse the process and shut off the hyperactivity. People who are losing their hearing often get tinnitus, or ringing in the ears, which only makes matters worse. Our findings suggest that retinoic acid is doing something similar in retinal degeneration, essentially causing ‘ringing in the eyes,’” Kramer said. “By inhibiting the retinoic acid receptor, we can decrease the noise and unmask the signal.”

The researchers sought out drugs known to block the receptor and showed that treated mice could see better, behaving much like mice with normal vision. They also tried gene therapy, inserting into ganglion cells a gene for a defective retinoic acid receptor. When expressed, the defective receptor crowded out the normal receptor in the cells and quieted their hyperactivity. Mice treated with gene therapy also behaved more like normal, sighted mice.
Ongoing experiments suggest that the brain, too, responds differently once the receptor is blocked, showing activity closer to normal. While Kramer continues experiments to determine how retinoic acid makes the ganglion cells become hyperactive and how effective the inhibitors are at various stages of retinal degeneration, he is hopeful that the research community will join the effort to repurpose drugs originally developed for cancer into therapies for improving human vision.

Kramer’s co-authors on the paper are first authors Michael Telias, Bristol Denlinger and Zachary Helft, and their UC Berkeley colleagues, Casey Thornton and Billie Beckwith-Cohen. The work was funded by the National Eye Institute, Thome Foundation and Foundation for Fighting Blindness.

Microsoft’s Seeing AI is an app that lets blind and limited-vision folks convert visual data into audio feedback, and it just got a useful new feature. Users can now use touch to explore the objects and people in photos. It’s powered by machine learning, of course, specifically object and scene recognition. All you need to do is take a photo or open one up in the viewer and tap anywhere on it.

“This new feature enables users to tap their finger to an image on a touch-screen to hear a description of objects within an image and the spatial relationship between them,” wrote Seeing AI lead Saqib Shaikh in a blog post. “The app can even describe the physical appearance of people and predict their mood.”

Because there’s facial recognition built in as well, you could very well take a picture of your friends and hear who’s doing what and where, and whether there’s a dog in the picture (important) and so on. This was already possible on an image-wide scale, but the app now lets users tap around to find where objects are, which is obviously important to understanding the picture or recognizing it from before. Other details that may not have made it into the overall description may also appear on closer inspection, such as flowers in the foreground or a movie poster in the background.

In addition to this, the app now natively supports the iPad, which is certainly going to be nice for the many people who use Apple’s tablets as their primary interface for media and interactions. Lastly, there are a few improvements to the interface so users can order things in the app to their preference. Seeing AI is free and available for iOS devices.
